PythonCat Note: At the Python Language Summit in May, Guido van Rossum gave a talk on “Making CPython Faster” (materials here), announcing his participation in the exciting Shannon Project, which aims to improve Python performance to 5x. Recently, Guido appeared on an English-language podcast (30 minutes long) to talk about his ongoing work on high performance and answer a few questions. The podcast author put together a summary of the content, and this article is a translation of that summary. Note: Audio and transcript available for download at the end of this article

1. Why are you interested in studying the performance of Python?

Guido: In a sense, it’s a relatively comfortable topic for me because it means dealing with the core of Python, which I’m fairly familiar with. When I was at Microsoft, I briefly focused on Azure, but I realized I didn’t like that kind of work when I was at Google or Dropbox. Then I focused on machine learning, but that took a lot of time to do things that weren’t Python related, and even the parts of it that were Python related were rare.

2. What was different about Mark Shannon’s ideas about Python performance and how did he convince you to implement them?

Guido: I like the way he thinks about things. Most other approaches that focus on Python performance, like PyPy and Cinder, don’t work for all usage scenarios because they’re not backward-compatible with extensions. between minor releases (e.g. 3.8→3.9) for a number of reasons, such as new opcodes, so modifying it is a relatively safe option.

3. Can you explain to us the concept of hierarchical execution of the Python interpreter?

Guido: When executing a program, you don’t know if it will crash after a fraction of a millisecond, or if it will run for three weeks. Because for the same code, in the first case, it might trigger a bug, and if it takes three weeks to run the program, maybe it makes sense to optimize all the code to be run half an hour in advance.

But obviously, especially in a dynamic language like Python, we do as much as we can without asking the user to tell us exactly what they need to do, you just want to start executing the code as soon as possible. So if there’s a small script, or a big program, and it happens to fail in execution or exit early for some reason, you don’t have to spend time optimizing the entire code.

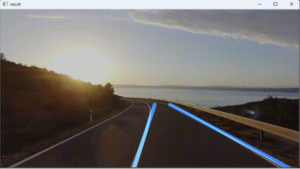

So, what we want to do is keep the bytecode compiler simple so that we can start executing the code as soon as possible. If there are certain functions that are executed multiple times, then we call them hot functions. There are various definitions of “hot”. In some cases, a function is defined as a hot function if it is called more than once, or more than twice, or more than 10 times. In other conservative cases, you might say “hot only if it is called 1000 times”.

Then, when the arguments are of certain types, the Specializing Adaptive Compiler (PEP-659 Specializing Adaptive Compiler) will try to replace some bytecode with faster bytecode. A simple hypothetical example is the plus operator in Python, which can add many objects, such as integers, strings, lists, and even tuples. However, you can’t add integers to strings.

So the optimization is to provide a separate “add two integers” bytecode, which is a second layer of bytecode hidden from the user. (The “optimization” is often referred to as speeding up quickening, but in general in our context we call it specializing). This opcode assumes that its two arguments are real Python integer objects, reads the values of those objects directly, adds the values in machine registers, and finally pushes the result back onto the stack.

The operation of adding two integers still requires type checking of the arguments. Therefore, it is not completely unconstrained, but this type checking is much faster to implement than the fully generalized object-oriented add operation.

Finally, it is possible for a function to be called millions of times with integer arguments, and then suddenly a small piece of code calls it with floating-point arguments, or worse. At this point, the interpreter will execute the original bytecode directly. This is an important part of the process, so that you always get the full Python semantics.

PythonCat Note: The ultimate goal of the Shannon Project is to layer the execution of the interpreter and make custom optimizations for the different layers. See the Github project description (https://github.com/markshannon/faster-cpython/blob/master/tiers.md) for details.

4. You usually hear about these techniques when talking about JIT (Just-In-Time) compilers, but officially Python doesn’t have them yet.

Guido: The Just-In-Time compilation scenario has a ton of emotional baggage that we want to avoid. For example, it’s not clear what exactly we’re compiling, and when. The interpreter compiles the source code into bytecode before the program starts executing, and then, in turn, converts the bytecode into specialized bytecode. This means that everything happens at some point in the runtime, so which part is called Just-In-Time (JIT)?

Also, it is often assumed that JIT automatically makes all code better. Unfortunately, you usually can’t really predict the performance of your code. Thanks to modern CPUs and their magical branch predictions, we already have enough performance. For example, we wrote a piece of code in a way that we thought would significantly reduce the number of memory accesses. However, when benchmarking it, we found that it ran as fast as the old unoptimized code because the CPU calculated the optimized access pattern without any help from us. I wish I knew what modern CPUs are doing with branch prediction and inline caching, because it’s like magic.